BERT stands for ‘Bidirectional Encoder Representations from Transformers’. The phrase sounds so technical that it reminds us of terminology from sci-fi Hollywood movies, right? But it is actually Google’s newest algorithmic update, and it has made searching for any query much more convenient for users.

The BERT algorithm was introduced to help Google better understand conversational searches that users phrase in their own natural language. To put it simply, “the BERT algorithm helps Google understand search queries in a better way”. The technology helps work out the best results to return for billions of search queries. About 15% of those queries are ones that Google has not come across before.


At its core, Search is about understanding language and cracking queries. With the latest advancements in the science of language understanding, made possible by machine learning, Google has significantly improved how it understands queries. Google describes this as its biggest leap forward in the past five years and one of the biggest leaps in the history of Search.

People might not always know the right words to use, or how to spell something, because we often come to Search looking to learn and don’t necessarily have the knowledge to begin with.

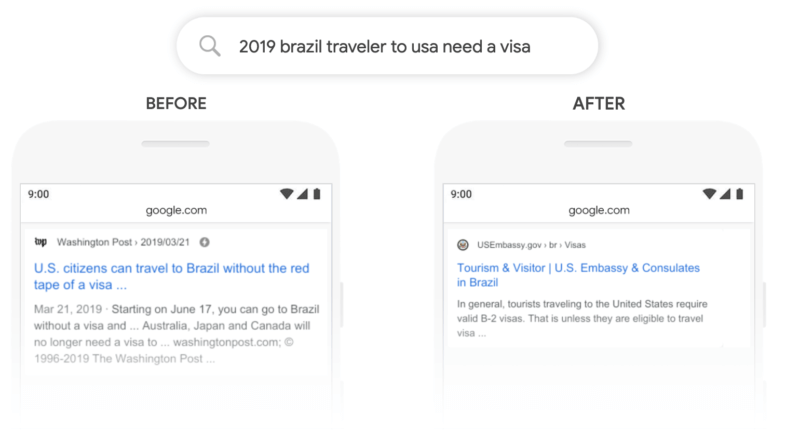

As an example, consider the query “How to decorate room gardening?”. Although the word “gardening” was used on purpose, Google returned results about interior design for the room. As of October 1, 2019, Google ignored the context that “gardening” adds and showed results related only to the room.

Google’s wonder, the BERT algorithm, was launched on October 25, 2019. It now understands that the word “gardening” is as important as “room” in this query, so the search results also include gardening-related web pages. Google rolled out BERT worldwide to over 70 languages on 10th December 2019.

In short, the BERT model considers the full context of a word to understand the intent behind a search query, so you can phrase your searches in whatever way feels natural to you.

We hope this article gives you a clear view of Google’s BERT update. Share your views by commenting below, and subscribe to our blog for more digital marketing updates.